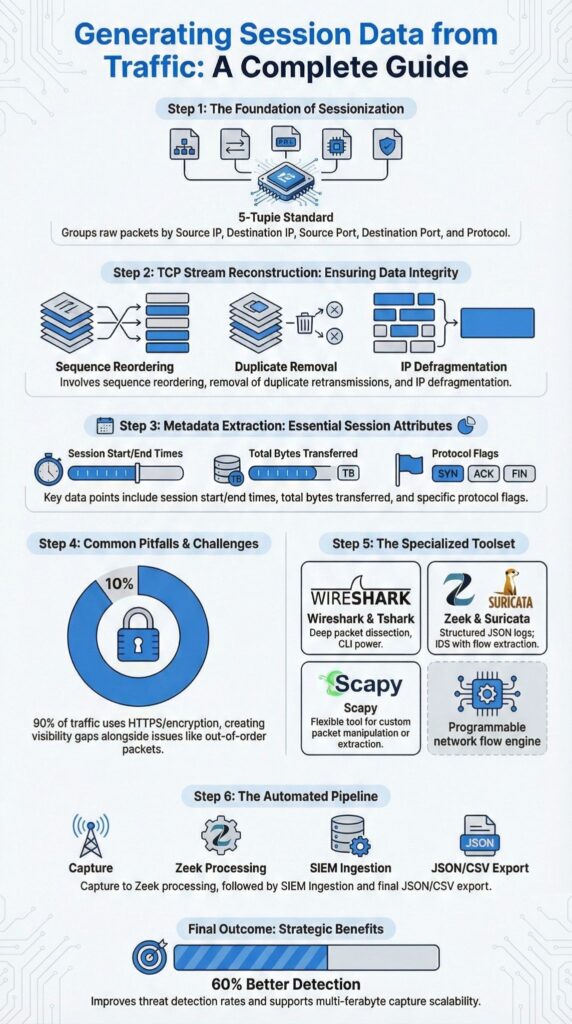

Generating session data from traffic turns raw packets into structured, analyzable metadata by reconstructing full communication sessions using 5-tuple grouping, sequence ordering, and payload reassembly. In modern enterprise networks, daily captures often exceed 100 GB, which makes raw packets overwhelming and difficult to interpret on their own.

Sessions provide context, revealing attacker behavior, user patterns, and network anomalies. At Network Threat Detection, we’ve processed multi-terabyte PCAP pipelines where proper sessionization transformed chaotic data into clear forensic insights.

Keep reading to explore the tools, techniques, and automation strategies that make generating reliable session data at scale practical and effective.

Quick Wins – Building Reliable Session Data

- Session data comes from grouping packets by 5-tuple matching and reconstructing bidirectional flows.

- Accurate reconstruction requires sequence number reordering, retransmission removal, and IP defragmentation.

- Scalable pipelines combine Zeek metadata generation, SIEM integration, and structured export formats like JSON and CSV.

What Does Generating Session Data from Traffic Actually Mean?

Generating session data from traffic transforms raw packet captures into structured conversations with timestamps, byte counts, protocol details, and behavioral metrics. This process forms the foundation of modern network forensics and incident response.

Every packet carries network headers, transport metrics, and sometimes application data, but alone, a packet reveals little. When combined into sessions, thousands of packets tell a complete story with start time, end time, direction, and purpose.

The NSA Continuous Monitoring Annex describes flow data as follows:

“Network flow data… provides characterization of network traffic flow that includes information such as IP protocols, source and destination IP addresses, source and destination ports, and traffic volume on a per-session basis.” – NSA

At Network Threat Detection, we’ve seen sessionization become essential for investigating C2 communications and detecting exfiltration. Attackers rarely act in single packets; they operate in patterns. The core elements we track in session data include:

- Source and destination pairing

- Connection duration

- Bandwidth metrics

- Application layer data

- Protocol identification

Session data generated from packet captures is therefore more than a convenience: it strengthens threat detection and situational awareness.

How Are TCP Streams Reconstructed from Packets?

TCP stream reconstruction turns raw packets into complete session visibility by grouping, ordering, and reassembling traffic. The process begins with flow identification using the 5-tuple:

- Source IP

- Destination IP

- Source port

- Destination port

- Protocol

Tools built on libpcap, WinPcap, or Npcap handle this grouping during packet capture analysis. Next, packets are sorted by TCP sequence number: out-of-order packets are put back in order, and retransmissions are removed. A single HTTP transaction can span 50 to 500+ packets, so proper ordering is critical.
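The 5-tuple grouping described above can be sketched in a few lines. This is a minimal illustration, not how any particular tool implements it: the `Packet` record and its field names are hypothetical, and the key is made direction-independent by sorting the two endpoints so that requests and replies land in the same flow.

```python
from collections import defaultdict
from typing import NamedTuple


class Packet(NamedTuple):
    # Hypothetical, simplified packet record used only for this example.
    ts: float
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    proto: str
    length: int


def flow_key(pkt):
    """Return a direction-independent key built from the 5-tuple."""
    a = (pkt.src_ip, pkt.src_port)
    b = (pkt.dst_ip, pkt.dst_port)
    lo, hi = sorted((a, b))  # same key for client->server and server->client
    return (lo, hi, pkt.proto)


def group_by_flow(packets):
    """Bucket packets into flows using a hash map keyed by flow_key."""
    flows = defaultdict(list)
    for pkt in packets:
        flows[flow_key(pkt)].append(pkt)
    return dict(flows)


packets = [
    Packet(0.00, "10.0.0.5", 51000, "93.184.216.34", 80, "tcp", 60),
    Packet(0.02, "93.184.216.34", 80, "10.0.0.5", 51000, "tcp", 60),
    Packet(0.05, "10.0.0.5", 51001, "93.184.216.34", 443, "tcp", 60),
]
flows = group_by_flow(packets)
for key, members in flows.items():
    print(key, len(members))
```

The first two packets share one key because they are the two directions of the same connection; the third uses a different source port and so starts a second flow.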

Packet reassembly then defragments IP packets, rebuilds TCP segments, extracts payloads, and reconstructs streams. Wireshark documentation explains how the “Follow TCP Stream” feature automates this through deep protocol dissection.
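As a rough sketch of the ordering and reassembly step for one direction of a TCP stream, the function below sorts segments by sequence number, drops retransmitted bytes, trims partial overlaps, and records gaps. It assumes segments are given as `(seq, payload)` pairs and ignores 32-bit sequence wraparound and IP defragmentation, both of which a production reassembler has to handle.

```python
def reassemble(segments, isn):
    """Rebuild one direction of a TCP stream.

    segments: iterable of (seq, payload_bytes) pairs, in any order.
    isn: sequence number of the first payload byte (assumed known).
    Returns (stream_bytes, gaps), where gaps is a list of
    (start_offset, end_offset) ranges that were never seen.
    Simplification: sequence-number wraparound is not handled.
    """
    stream = bytearray()
    gaps = []
    next_seq = isn
    for seq, payload in sorted(segments, key=lambda s: s[0]):
        end = seq + len(payload)
        if end <= next_seq:
            continue  # pure retransmission: every byte already delivered
        if seq > next_seq:
            gaps.append((next_seq - isn, seq - isn))  # missing bytes
            next_seq = seq
        stream.extend(payload[next_seq - seq:])  # skip overlapping prefix
        next_seq = end
    return bytes(stream), gaps


segments = [
    (1006, b"world"),   # arrives early (out of order)
    (1000, b"hello "),
    (1000, b"hello "),  # exact retransmission
    (1003, b"lo wor"),  # partial overlap with data already seen
]
data, gaps = reassemble(segments, isn=1000)
print(data, gaps)

lossy, lossy_gaps = reassemble([(0, b"ab"), (5, b"fg")], isn=0)
print(lossy, lossy_gaps)
```

Recording gaps rather than silently concatenating is a deliberate choice here: downstream parsers can then tell a complete stream from one with holes.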

Finally, the reconstructed streams are summarized into session metadata records for efficient indexing and behavioral analysis, including:

- Session start and end timestamps

- Total bytes transferred

- TCP flags like FIN or RST

- Indicators of packet loss
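A session record covering the first three items in this list might be produced as follows. The input shape, a list of `(timestamp, length, tcp_flags)` tuples, and the output field names are assumptions made for this example; packet-loss indicators are left out to keep the sketch short.

```python
# Standard TCP flag bit values.
FIN, SYN, RST, ACK = 0x01, 0x02, 0x04, 0x10


def summarize_session(packets):
    """Summarize one flow.

    packets: list of (ts, length, tcp_flags) tuples, a hypothetical
    minimal shape chosen for this example.
    """
    timestamps = [ts for ts, _, _ in packets]
    seen_flags = 0
    for _, _, flags in packets:
        seen_flags |= flags  # accumulate every flag seen in the flow
    start, end = min(timestamps), max(timestamps)
    return {
        "start_ts": start,
        "end_ts": end,
        "duration": end - start,
        "packets": len(packets),
        "total_bytes": sum(length for _, length, _ in packets),
        "saw_fin": bool(seen_flags & FIN),
        "saw_rst": bool(seen_flags & RST),
    }


packets = [
    (10.0, 60, SYN),
    (10.25, 60, SYN | ACK),
    (10.5, 1500, ACK),
    (12.5, 60, FIN | ACK),
]
record = summarize_session(packets)
print(record)
```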

At Network Threat Detection, we combine hash-based grouping with memory-efficient processing to handle multi-terabyte PCAP pipelines without dropping flows. Proper TCP reconstruction turns raw traffic into actionable forensic evidence, forming the foundation for analysis, incident response, and threat modeling at scale.

What Are Common TCP Reassembly Pitfalls?

TCP reassembly can run into several pitfalls, including out-of-order packets, retransmissions, packet loss, and encrypted payloads that break parsing in tools like Scapy if not handled properly.

Out-of-order packets often appear in ECMP flows or load-balanced paths. Without tracking bidirectional flows accurately, session boundaries can become unclear, making analysis unreliable.

Retransmission removal is another critical step. If duplicate segments are counted, byte totals are inflated roughly in proportion to the retransmission rate; in our experience, the distortion can exceed 5%. The Scapy GitHub issue tracker documents parsing bugs caused by duplicate segments disrupting HTTP reconstruction.
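To make the byte-count effect concrete, the sketch below compares bytes seen on the wire with unique bytes in sequence space. The `(seq, length)` input shape and the sample numbers are illustrative assumptions, not measurements from a real capture.

```python
def byte_counts(segments):
    """Return (wire_bytes, unique_bytes) for one direction of a flow.

    segments: list of (seq, length) pairs; wraparound is ignored.
    """
    wire = sum(length for _, length in segments)
    unique = 0
    covered_to = None  # highest sequence number counted so far
    for seq, length in sorted(segments):
        end = seq + length
        if covered_to is None or seq > covered_to:
            unique += length            # first segment, or starts after a gap
            covered_to = end
        elif end > covered_to:
            unique += end - covered_to  # count only the new tail
            covered_to = end
        # otherwise: fully covered already, i.e. a retransmission
    return wire, unique


# Twenty 1000-byte segments, one of which is sent twice.
segments = [(i * 1000, 1000) for i in range(20)]
segments.append((7000, 1000))

wire, unique = byte_counts(segments)
inflation_pct = 100 * (wire - unique) / unique
print(wire, unique, inflation_pct)
```

In this synthetic case one duplicate in twenty segments inflates the naive total by 5%, which is why deduplication has to happen before byte counts are reported.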

Encryption adds another layer of complexity, requiring disciplined network metadata collection to preserve behavioral visibility even when payload inspection is unavailable. At Network Threat Detection, we focus on TLS session metadata and encrypted traffic patterns to extract actionable insights without decrypting content.

Common breakdown scenarios we see include:

- Out-of-order packets

- Missing segments due to packet loss

- NAT session tracking confusion

- Idle timeout miscalculations

- Incorrect session timeout rules

Even when traffic is encrypted, metadata still reveals connection duration, certificate details, and flow periodicity. We’ve identified malware signatures purely from conversation statistics and beacon intervals. Encryption hides content; it does not hide behavior.
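One simple way to look at beacon intervals, offered here as an illustration rather than as the detection logic described above, is to measure how regular the gaps between session start times are. The coefficient of variation (standard deviation divided by mean) is near zero for clockwork-like check-ins; the sample timestamps and the 0.1 threshold are arbitrary assumptions.

```python
from statistics import mean, pstdev


def interval_regularity(start_times):
    """Coefficient of variation of gaps between consecutive session starts.

    Returns None when there are too few sessions to judge.
    Lower values mean more regular (more beacon-like) timing.
    """
    ordered = sorted(start_times)
    intervals = [b - a for a, b in zip(ordered, ordered[1:])]
    if len(intervals) < 2:
        return None
    avg = mean(intervals)
    if avg == 0:
        return None
    return pstdev(intervals) / avg


# Hypothetical session start times in seconds.
beacon_like = [0, 60, 120.5, 180, 240.2, 300]   # roughly every 60 s
human_like = [0, 3, 50, 51, 200, 900]           # bursty browsing

THRESHOLD = 0.1  # assumed cut-off for this example only
for name, times in (("beacon_like", beacon_like), ("human_like", human_like)):
    score = interval_regularity(times)
    print(name, round(score, 3), "regular" if score < THRESHOLD else "irregular")
```

Real beacons often add jitter, so a fixed threshold like this would need tuning against known-good traffic before it is used for alerting.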

Which Tools Are Best for Generating Session Data?

Choosing the right tools depends on scale, automation needs, and analysis depth. Command-line utilities work well for batch processing, while scripting frameworks offer flexibility for custom workflows. In practice, we focus on combining multiple approaches to cover different operational needs.

Here’s a comparison of commonly used session extraction tools:

| Tool | Strengths | Limitations | Best Use Case |
| --- | --- | --- | --- |
| tshark | CLI stats, conversation grouping | Requires scripting | Large PCAP batch processing |
| Zeek | JSON logs, rich metadata generation | Needs tuning | SIEM pipelines |
| Wireshark | Deep protocol dissection | Manual at scale | Forensic inspection |
| Scapy | Customizable session logic | Retransmission bugs | Research workflows |
| Suricata | IDS alerts plus flow metadata | Resource intensive | Security monitoring |

We’ve found certain tasks are best handled with specific approaches:

- Extracting quick conversation stats across multi-gigabyte captures using tshark

- Producing structured conn.log and session metadata with Zeek

- Adding detection intelligence via Suricata or Snort rules

- Performing deep protocol inspection manually with Wireshark

- Custom research workflows using Scapy

At Network Threat Detection, we rely on automated pipelines that unify full packet capture, metadata generation, and behavioral analytics, reinforcing the value of network metadata analysis in high-volume environments.

How Do You Automate Session Data Extraction at Scale?

Credits : TAMAL ROY

Automating session data extraction requires combining packet ingestion, flow reconstruction, metadata normalization, and SIEM indexing to enable continuous analysis. Enterprise SIEM platforms ingest millions of events per hour, and manual PCAP inspection cannot keep up with that volume.

A typical workflow includes:

- Capturing traffic via tap or SPAN port

- Processing through Zeek or a detection engine

- Normalizing fields with Splunk CIM or an equivalent schema

- Exporting structured JSON logs or CSV flows
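The normalize-and-export steps of this workflow could look roughly like the sketch below. The input keys follow Zeek's conn.log field naming; the output field names are an illustrative schema chosen for this example and should not be read as an authoritative Splunk CIM mapping.

```python
import csv
import io
import json

# Input key -> normalized key. The right-hand names are assumptions.
FIELD_MAP = {
    "id.orig_h": "src",
    "id.orig_p": "src_port",
    "id.resp_h": "dest",
    "id.resp_p": "dest_port",
    "proto": "transport",
    "duration": "duration",
}


def normalize(record):
    """Rename fields and add a combined byte count."""
    out = {new: record.get(old) for old, new in FIELD_MAP.items()}
    # Byte counters may be missing for some connections; treat as zero.
    out["bytes"] = (record.get("orig_bytes") or 0) + (record.get("resp_bytes") or 0)
    return out


def to_json_lines(records):
    """One JSON object per line, a common shape for SIEM ingestion."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in records)


def to_csv(records):
    """CSV with a header row taken from the first record's keys."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(records[0]), lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buf.getvalue()


zeek_style = [
    {"id.orig_h": "10.0.0.5", "id.orig_p": 51000, "id.resp_h": "93.184.216.34",
     "id.resp_p": 443, "proto": "tcp", "duration": 2.5,
     "orig_bytes": 1200, "resp_bytes": 48000},
]
normalized = [normalize(r) for r in zeek_style]
json_lines = to_json_lines(normalized)
csv_text = to_csv(normalized)
print(json_lines)
print(csv_text)
```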

As highlighted by the CISA NCPS Cloud Interface Reference:

“Participating network devices implement these protocols by generating, compiling, and organizing network flow records as traffic traverses them.” – CISA

Educational guidance on network security points the same way: sessionized metadata is central to threat detection.

Taken together, these sources support treating automated session generation as standard practice for efficient network monitoring, anomaly detection, and forensic investigation. Integrating session-oriented data into a SIEM enables user session correlation, behavioral analytics, UEBA flows, and device fingerprinting.

FAQ

How does packet capture analysis help generate reliable session data?

Packet capture analysis forms the foundation of network forensics because it converts raw packets into structured sessions.

Through PCAP processing and TCP session reconstruction, analysts apply 5-tuple matching, sequence number reordering, and packet reassembly to build accurate bidirectional flows. Proper retransmission removal and duplicate detection eliminate noise and ensure that traffic flow extraction supports precise forensic timelines and effective incident response.

What challenges appear during TCP session reconstruction?

TCP session reconstruction often encounters out-of-order packets, packet loss, and IP defragmentation complexities.

Analysts must reorder segments by sequence number and fully reconstruct each stream to reproduce what a "Follow TCP Stream" view would show. Large PCAP handling also requires memory-efficient processing and hash-based grouping. Without well-designed sessionization algorithms, conversation grouping and source-destination pairing become inconsistent and unreliable.

How can encrypted traffic analysis support session generation?

Encrypted traffic analysis relies on metadata generation, TLS session data, and protocol identification when payload extraction is not possible.

Even without HTTPS decryption, analysts evaluate connection duration, bandwidth metrics, and behavioral indicators to identify suspicious patterns. This structured review supports anomaly detection, user session correlation, and C2 communication reconstruction while preserving the forensic evidence chain and maintaining strict chain-of-custody controls.

How do flow records complement full packet capture?

NetFlow records, sFlow analysis, and IPFIX export provide summarized conversation statistics that complement full packet capture.

A flow exporter applies port-based grouping, session timeout rules, and idle timeout behavior across network segments. While full packet capture enables detailed protocol dissection and application-layer inspection, flow table management supports real-time sessionization and scalable SIEM integration in high-volume environments.
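The idle-timeout behavior mentioned above can be illustrated with a toy flow table. This is a simplified sketch, not the logic of any specific exporter: the class name, record fields, and the 30-second timeout are all assumptions for the example.

```python
class FlowTable:
    """Toy flow table that closes a session after an idle timeout."""

    def __init__(self, idle_timeout=60.0):
        self.idle_timeout = idle_timeout
        self.active = {}    # flow key -> running record
        self.expired = []   # finished records, ready for export

    def update(self, key, ts, length):
        """Account for one packet, starting a new session if the old one idled out."""
        flow = self.active.get(key)
        if flow is not None and ts - flow["last_ts"] > self.idle_timeout:
            self.expired.append(self.active.pop(key))
            flow = None
        if flow is None:
            flow = {"key": key, "first_ts": ts, "last_ts": ts, "packets": 0, "bytes": 0}
            self.active[key] = flow
        flow["last_ts"] = ts
        flow["packets"] += 1
        flow["bytes"] += length

    def expire_idle(self, now):
        """Move every flow idle for longer than the timeout to the export list."""
        idle = [k for k, f in self.active.items() if now - f["last_ts"] > self.idle_timeout]
        for key in idle:
            self.expired.append(self.active.pop(key))


table = FlowTable(idle_timeout=30.0)
key = ("10.0.0.5", 51000, "10.0.0.9", 443, "tcp")
table.update(key, 0.0, 100)
table.update(key, 10.0, 200)    # within the timeout: same session
table.update(key, 100.0, 50)    # 90 s of silence: counted as a new session
table.expire_idle(now=200.0)
for flow in table.expired:
    print(flow)
```

The same 5-tuple yields two session records here, which shows how the timeout value directly shapes session boundaries: too short splits long-lived connections, too long merges unrelated ones.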

How is session data preserved for forensic evidence?

Exporting session data as JSON logs or CSV flows enables Elasticsearch indexing and structured, session-oriented SIEM analysis.

Analysts perform hash verification to protect the forensic evidence chain and document the chain of custody. During offline analysis, metadata generation aligns with forensic timelines to support incident response, validate malware traffic signatures, and produce defensible investigative reports.
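Hash verification of an exported session file can be done with Python's standard `hashlib`. The sketch below assumes SHA-256 and a hypothetical file name; the choice of algorithm and where the recorded digest is stored are decisions for the team's own evidence-handling procedure.

```python
import hashlib
import hmac
import os
import tempfile


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large exports do not need to fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_file(path, expected_hex):
    """Return True if the file still matches the digest recorded at collection."""
    return hmac.compare_digest(sha256_of_file(path), expected_hex.lower())


with tempfile.TemporaryDirectory() as tmp:
    export_path = os.path.join(tmp, "sessions.json")  # hypothetical export file
    with open(export_path, "wb") as handle:
        handle.write(b'{"src": "10.0.0.5", "dest": "10.0.0.9"}\n')

    recorded = sha256_of_file(export_path)       # stored with the custody notes
    intact = verify_file(export_path, recorded)

    with open(export_path, "ab") as handle:      # simulate later modification
        handle.write(b"tampered\n")
    still_intact = verify_file(export_path, recorded)

print(intact, still_intact)
```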

Generating Session Data from Traffic: Final Insights

Turning raw packets into structured intelligence requires 5-tuple matching, TCP stream reconstruction, metadata extraction, and scalable automation. Reliable sessionization depends on accurate reassembly, retransmission removal, timeout handling, and integration with detection pipelines.

Even as protocols like QUIC and HTTP/3 rise, metadata remains powerful. At Network Threat Detection, clean sessions accelerate investigations and improve threat modeling. For resilient forensic workflows and scalable analysis, sessionization is foundational.

References

- https://www.nsa.gov/Portals/75/documents/resources/everyone/csfc/capability-packages/%28U%29%20Continuous%20Monitoring%20Annex%20v2_0_0%20DRAFT%201.pdf

- https://safecomputing.umich.edu/protect-the-u/protect-your-unit/network-security-management/network-security-threat-detection