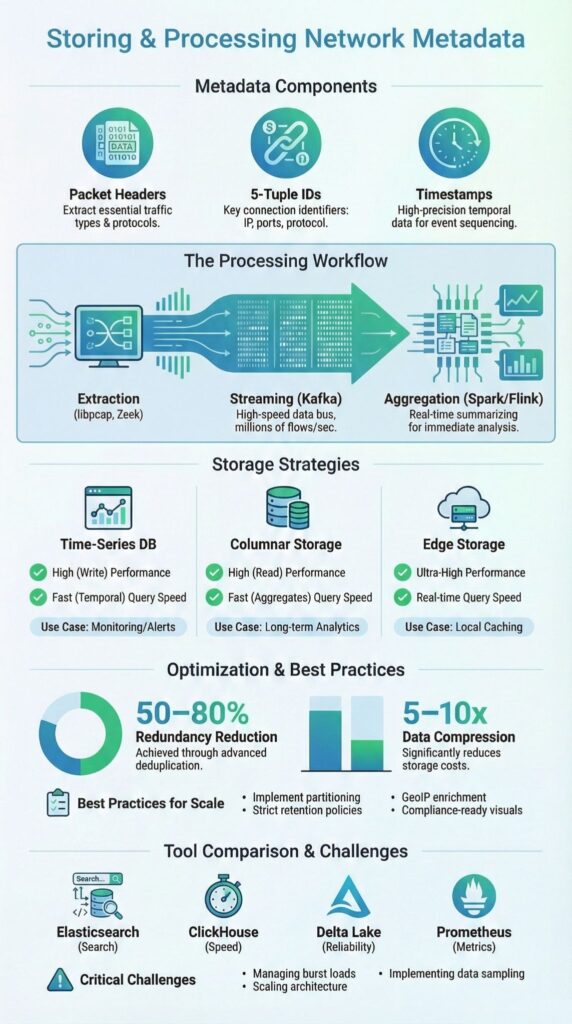

Storing processing network metadata means capturing packet headers, flow records, timestamps, and IP addresses without saving full payloads. This approach provides visibility into traffic patterns, security events, and anomalous behavior while keeping storage and processing demands manageable.

In our experience with Network Threat Detection, prioritizing metadata-first strategies allows teams to scale monitoring across multi-gigabit networks, reduce costs, and maintain real-time insights.

Combining structured databases, columnar storage, and streaming pipelines ensures efficient processing and rapid query performance. Keep reading to explore practical storage architectures, high-throughput processing workflows, and best practices for managing network metadata effectively.

Quick Wins – Scaling with Metadata-First Architecture

- Metadata-first strategies enable efficient security monitoring, forensics, and performance analysis.

- Combining time-series, columnar, and edge storage balances speed, cost, and scalability.

- Streaming pipelines and indexing techniques allow sub-second queries on billions of network flows.

What Is Network Metadata and Why Does It Matter?

Network metadata captures packet headers, 5-tuple identifiers (source and destination IPs, ports, protocol), timestamps, and flow durations, without storing payloads. This makes it far smaller than full packet capture, often just 1–10% of traffic volume, yet still rich with actionable insights for cybersecurity, anomaly detection, and performance monitoring.

As highlighted by MITRE D3FEND

“Network protocol metadata is first collected and processed in real‑time or post‑facto.” – MITRE D3FEND

In our experience with Network Threat Detection, metadata-first strategies scale much better than payload-heavy approaches. By storing only headers and flow records, we can query billions of flows quickly while keeping sensitive payloads private.

Key use cases include:

- Cybersecurity detection – spotting malware, lateral movement, and DDoS patterns

- Digital forensics – reconstructing attack timelines using Zeek logs or Suricata metadata

- Performance monitoring – tracking top talkers, bandwidth usage, and latency spikes

We’ve seen that metadata-focused storage reduces RAM and disk overhead, supports time-series databases, enables protocol buffer pipelines, and allows compliance-aligned retention policies, all while preserving high-fidelity threat visibility and operational performance.

What Are the Best Storage Options for Network Metadata?

Choosing storage for network metadata depends on query patterns, retention requirements, and operational speed. In our experience with Network Threat Detection, combining multiple storage types provides the best balance between performance, cost, and scalability.

Insights from Wikipedia

“A metadata repository is a database created to store metadata.” – Wikipedia

Time-series databases like InfluxDB and TimescaleDB handle high-velocity NetFlow and packet metadata. They automatically partition by time and enable sub-second queries. We use these for:

- Real-time anomaly detection

- Operational dashboards for SOC teams

- Alerting on abnormal traffic patterns

Columnar storage such as Parquet or Delta Lake on object storage (S3) compresses data to 1–10% of raw size. This is ideal for:

- Historical forensics and deep packet metadata analytics

- Machine learning feature extraction

| Storage Option | Strengths | Weaknesses | Best Fit |

| InfluxDB | High write throughput, temporal queries | Limited complex joins | Real-time metrics |

| Parquet on S3 | 1–10% storage size, cost-efficient | Batch-oriented | Historical analytics |

| SQLite | Minimal footprint, local access | Limited concurrency | Edge logging |

| Redis | Sub-ms query speed | Memory-bound | Active sessions |

We combine these strategies to ensure scalable, metadata-first Network Threat Detection, using time-series DBs for live monitoring, columnar storage for historical analysis, and edge caching for immediate local insights.

How Do You Process Network Metadata at Scale?

Processing network metadata at scale requires careful extraction, normalization, and streaming analytics. We often begin with packet capture using libPCAP or Zeek, producing structured outputs in JSON or Protocol Buffers.

Flow exporters such as NetFlow, IPFIX, or sFlow provide complementary visibility across network segments.

Standardizing the data ensures consistency, enabling downstream pipelines to operate efficiently and reducing errors in aggregation or threat detection, particularly when utilizing network metadata session records to reconstruct session behavior and bidirectional flow timelines.

Extraction & Normalization:

- Packet capture metadata extraction

- Flow export using NetFlow, IPFIX, or sFlow sampling

- Standardize data in JSON or Protocol Buffers for consistent parsing

Streaming & Aggregation:

- Kafka handles high-velocity ingestion of millions of flows per second

- Spark or Flink performs windowed aggregation for anomaly detection, top talkers, and burst load analysis

- Example: track top 10 talkers per five-minute window using 5-tuple indexing

Optimization Techniques:

- Deduplication with Bloom filters reduces redundant flows by 50–80%

- Indexing by 5-tuple and timestamp partitions enables sub-second queries

- Compression with Snappy or Zstd balances CPU overhead and storage efficiency

By combining extraction, normalization, streaming, and optimization, we ensure scalable, real-time Network Threat Detection, providing actionable insights even in multi-gigabit enterprise networks.

What Are the Best Practices for Scalable Metadata Storage?

Scalable network metadata storage requires balancing performance, compliance, and operational flexibility. We often start by partitioning data by time, daily or hourly buckets, and by source, which prevents shard growth beyond practical limits like 1TB per shard.

Retention policies are critical: a 90-day hot store combined with a one-year cold archive allows both fast queries and historical forensic analysis while complying with GDPR-style requirements.

Compression strategies such as Snappy or Zstd reduce storage overhead by 5–10x, and we routinely apply enriching metadata with context during ingestion, such as GeoIP tagging and threat intelligence correlation, to prepare flows for anomaly detection or ML feature extraction.

Edge storage with lightweight formats like Delta or SQLite supports small writes and metrics collection closer to the source.

Key practices include:

- Time-based partitioning to manage shard size

- Compression using Snappy or Zstd

- Enrichment for anomaly detection and ML features

- Edge storage for lightweight metrics

- Retention policies that balance compliance and accessibility

We have found that combining columnar storage approaches with time-series databases creates a flexible infrastructure that scales for both real-time dashboards and batch analytics.

How Do Leading Tools Compare for Querying and Analytics?

Choosing the right platform depends on the type of metadata workload, retention needs, and query patterns, especially when aligned with structured data sources collection strategies that define ingestion scope and indexing models.

In our experience, different tools serve distinct purposes: Elasticsearch provides fast full-text search and real-time dashboards, though volumes beyond 1TB/day can strain memory.

We recommend combining tools to balance performance and scalability. For instance, Elasticsearch can power dashboards, ClickHouse handles large-scale analytics, and Delta Lake supports forensic replay. Prometheus complements them with near-real-time monitoring, reducing operational blind spots. Key operational strategies include:

- Prioritizing tools based on query type: real-time vs batch

- Using columnar stores for historical analytics

- Integrating monitoring pipelines for alerting

- Aligning tool selection with cost and infrastructure constraints

| Tool | Strengths | Weaknesses | Ideal Use Case |

| Elasticsearch | Full-text search, dashboards | High RAM, slow >1TB/day | Real-time dashboards |

| ClickHouse | Ultra-fast OLAP queries | Complex setup | Historical & ML queries |

| Delta Lake | ACID + versioning | Spark dependency | Data lakes & replay |

| Prometheus | Lightweight metrics | Not raw flow storage | Monitoring metadata |

What Challenges Arise in High-Velocity Networks?

Credits : Caringo

High-throughput networks, 10Gbps and above, generate massive metadata volumes that can strain both storage and processing pipelines.

In our experience, sampling strategies such as 1:1000 flows or leveraging NetFlow/IPFIX exporters reduce ingestion pressure while maintaining critical visibility. Auto-scaling stream processors handle bursts efficiently, and small Delta table writes or bulk Iceberg uploads optimize throughput without blocking real-time analytics.

Key challenges include:

- Metadata bloat and storage overhead

- RAM and CPU balancing during peak traffic

- Burst load mitigation with auto-scaling clusters

- Choosing between JSON flow exports versus bulk Iceberg uploads

| Strategy | Strengths | Weaknesses | Ideal Use Case |

| Flow Sampling | Reduces ingestion load | May skip rare events | Real-time monitoring |

| Auto-Scaling Streams | Handles bursts efficiently | Cluster management overhead | High-velocity networks |

| Delta Table Writes | Low-latency small inserts | Requires careful partitioning | Streaming analytics |

| Iceberg Bulk Uploads | High-throughput batch writes | Latency for real-time | Historical & forensic storage |

We have found that combining these approaches ensures Network Threat Detection remains effective and resilient, even under extreme traffic conditions, while preserving query performance and forensic readiness.

FAQ

What is the best approach for network metadata storage and processing network flows efficiently?

Efficient network metadata storage requires structured storage solutions combined with strategies for high-ingestion rates db.

Using a time-series db network or flow records database helps manage 10Gbps metadata bloat. Partitioning by time, shard management network, and retention policy network ensure scalability. Compressed flow size with Snappy compression net or Zstd metadata reduces storage costs without affecting processing network flows or query latency flows.

How can packet header capture and NetFlow collection improve network monitoring?

Packet header capture using Wireshark PCAP analysis or libPCAP metadata extract provides detailed traffic insights.

NetFlow collection, sFlow sampling, and IPFIX export feed flow records databases, enabling Spark network processing, Flink flow aggregation, or ML features flows. Deduplication network data and 5-tuple indexing improve accuracy while keeping RAM usage flows efficient and supporting real-time analysis of network behavior.

What are effective storage formats for Zeek logs storage and Suricata metadata?

Zeek logs storage and Suricata metadata are best managed with columnar storage network formats like Parquet network metadata or Delta Lake flows. Apache Kafka streams network and Iceberg bulk upload support high-velocity network data.

Protocol buffers network or JSON flow export ensure compatibility. Daily bucket flows, timestamp partitioning, and versioning flow data allow fast historical query net and accurate replay forensics meta.

How can I manage privacy and compliance while storing network metadata?

To maintain privacy and compliance, apply privacy payload strip and GDPR network logs policies when storing network metadata. Threat intel flows and structured storage query practices further enhance security.

Edge device storage and sampling network traffic reduce exposure, while anonymizing src dst IP storage and port protocol db entries protects sensitive data without interfering with performance monitoring meta or anomaly detection metadata.

What tools help optimize query and analytics on high-ingestion network metadata?

High-ingestion network metadata can be efficiently queried using ClickHouse network analytics, TimescaleDB packets, or InfluxDB netflow.

Full-text search network, RAM usage flows optimization, auto-scaling metadata, and lightweight metrics db support real-time dashboards net. Windowed aggregation flows, burst load processing, small writes Delta, and compressed flow size improve performance while minimizing CPU overhead compress.

Storing and Processing Network Metadata

Storing and processing network metadata effectively relies on a metadata-first approach, structured storage, and scalable pipelines. Combining time-series databases, columnar formats, and edge caches allows real-time detection, forensic readiness, and historical analysis.

Following best practices, partitioning, compression, retention policies, and enrichment during ingestion, ensures performance and compliance. In our experience, these strategies reduce overhead while improving visibility across enterprise networks. Explore our complete network analytics framework for actionable insights.

References

- https://d3fend.mitre.org/technique/d3f%3AProtocolMetadataAnomalyDetection/

- https://en.wikipedia.org/wiki/Metadata_repository